The Numbers Don't Lie

Financing Generative AI is Revealing Its Cracks. Will this Bubble Burst?

Who am I? I’m a writer who has contributed to Forbes, HuffPost, and Grit Daily. I am also a strategist and entrepreneur who has worked in data privacy for the last 10 years. Through my time in the early days of Yahoo!, the rise of social media, and the shift to data monetization, I’ve become a tech ethicist. These days, I am motivated to expose the glitches in the trillion-dollar AI industry. System Malfunction is my foray into what these glitches mean for all of us. My posts are free. I hope you enjoy!

I have been following the rise of LLMs and how each of the big frontier labs has attempted to accelerate generative AI technology and claim it as the road to AGI, one that will reshape humanity as we know it. But I don’t buy it, nor do many others. The fact is, the cracks in this plan are beginning to show… little by little.

Jing Hu debunked research findings that Generative AI was killing entry-level jobs. In March 2023, Goldman Sachs predicted that AI would destroy or degrade 300 million full-time jobs. What Hu discovered was that the job declines, particularly among college graduates, were not the result of AI. Rather, they followed an inflation surge driven by pandemic supply and demand imbalances and the start of the Federal Reserve’s rate hikes, which drove up the cost of capital and led to cost-cutting measures that included the firing of junior staff.

Hu mentions that since 1980, every major economic shock has resulted in “1) disproportionate cuts to entry-level hiring, 2) permanent downward shifts in youth labour force participation, 3) long-term wage scarring for affected cohorts and 4) failure to recover to pre-shock hiring levels even during expansions.”

2025 was also supposed to be the year when adoption would soar—in fact, the opposite was true. The recent McKinsey Report on the State of AI in 2025 found that the technology is still in the early stages of adoption: “Nearly two-thirds of respondents say their organizations have not yet begun scaling AI across the enterprise.” In addition, the curiosity with agents remains just that, as 62% indicate they are still experimenting with the technology.

Bloomberg recently reported, in “Sam Altman’s ‘Last Resort’ for ChatGPT Looks a Lot Like Facebook,” that OpenAI burned through about $8 billion of cash last year and “seems desperate for revenue.” The company has now announced that ads are coming to ChatGPT. Data monetization is the road to privacy hell. As noted in her most recent post, she starts to expose Sam Altman’s plan to learn everything about each of us. Right now, the priority for these frontier models is survival.

Prominent researchers, including Yann LeCun and Demis Hassabis, say that LLMs are a dead end on the path to Altman’s holy grail of AGI:

Gary Marcus said this:

“In the early days of AI, people took cognitive science seriously. In the last several years, the field of AI has been taken over by people who do a form of statistical learning that owes little to what humans do. The field's leaders have been dismissive of almost everything except a small set of techniques. And they did this with the hypothesis that scaling would get to AGI. And I don’t think it has. And so, we should go back to thinking about what cognitive science can teach us.”

Generative AI, By the $$

The models have failed to deliver on their promises. That is one signal.

The second signal? Significant investments in data centers to scale compute.

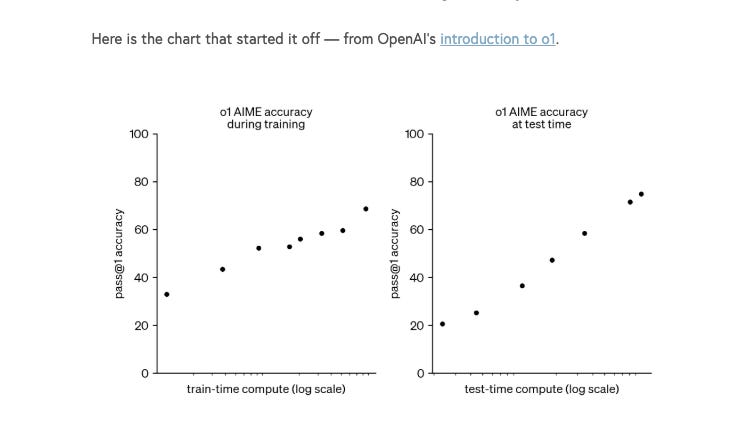

Since 2023, both Jensen Huang and Sam Altman have been touting the need to invest in compute. The two charts below show that improving model performance required scaling inference compute (an approach first presented in OpenAI’s o1 announcement). The excitement stemmed from the belief that more compute (in terms of financial costs and energy use) would produce smarter models.

This purported law of scaling inference compute towards more intelligent models would mean that “compute would need to increase exponentially in order to keep making constant progress.” Toby Ord explains,

“In computer science, exponentially increasing costs are often used as a yardstick for saying a problem is intractable,” that is, not solvable at any practical cost.
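To make Ord's point concrete, here is a minimal sketch. It assumes, purely for illustration, that a benchmark score rises by a fixed number of points for every tenfold increase in compute. The constant `POINTS_PER_10X` and the function name are hypothetical and not taken from Ord's charts.

```python
# Assumption for illustration only: the score rises by POINTS_PER_10X points
# for every tenfold increase in compute. The value 10.0 is invented.
POINTS_PER_10X = 10.0


def compute_multiplier(score_gain: float) -> float:
    """Factor by which compute must grow to gain `score_gain` points."""
    return 10 ** (score_gain / POINTS_PER_10X)


# Equal steps of progress require multiplying compute, not adding to it.
for gain in (10, 20, 30, 40):
    print(f"+{gain} points needs {compute_multiplier(gain):,.0f}x the compute")
```

Under this assumption, each additional step of the same size costs ten times more than the one before it, which is what "exponentially increasing costs" means in practice.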

This has led to massive investments in data centers (Meta: $600 million; OpenAI/SoftBank: $1 billion), which will now compete with cities for energy. The demand for compute is largely uncertain, hence risky, and directly impacts how much capital to allocate to building these massive and expensive infrastructures.

Greg Crennan, Chief Marketing Strategist and Founder of The Coastal Journal, has been following the money during the Generative AI mania. When it became a bubble, he looked into the financials of Big Tech and the frontier model makers to determine whether they could sustain growth, artificial or organic. As Crennan states,

Much of that information can be found in their balance sheets, debt levels, demand, and CapEx investments… The issue is that these companies—and what they’ve learned over the years—is that what they’re doing may not actually be illegal, so they’ve found ways to push their limits all the way to that red line before crossing illegal activities. That fine line is very important.

Cory Doctorow, a well-known journalist and advocate for digital rights and an open internet, said this recently:

“While you are growing to domination, the market loves you, but once you achieve dominance, the market lops 75% or more off your value in a single stroke if they do not trust your pricing power. Which is why growth-stock companies are always desperately pumping up one bubble or another, spending billions to hype the pivot.”

“The primary goal is to keep the market convinced that your company will continue to grow and to remain convinced until the bubble comes along.”

Crennan agrees that Big Tech is trying to convince us of this massive growth driven by Generative AI and the significant capital expenditures they’ve undertaken.

“The issue is that the cash flow was significant at one point, but is now being diverted towards expensive CapEx. Investors are now questioning companies about the returns from these investments. No one has been able to show how to make money from them, and towards the end of 2025, investors were concerned that their returns would not materialize.”

Big Tech Stock Performance Warning

Coastal Journal posted the following:

(PLTR, TSLA, NVDA, AMD)

Crennan added that we are in a plateau phase, where a stock's price (Nvidia's, for example) is roughly where it was seven months earlier, apart from weekly ebbs and flows. People are waiting to get more information. How will this bubble break? Crennan says,

“The thing investors still refuse to say out loud is this: the most dangerous bubble stocks don’t collapse because the business goes to $0.00. They collapse because the valuation was never grounded in a world where anything can go wrong.”

Crennan explains that when the market is flooded with liquidity and interest rates are relatively low, investors seek a return on investment. And in technology, when investors see the shiniest object in the room, everyone rushes in to buy it because they think it will deliver a massive return.

I urge you to read Coastal Journal’s assessment of each of these stocks. I’ll leave the Coles Notes takeaway from their post for each company:

PLTR: “Palantir is the most fragile stock in the market. Not because the business is fake, but because the price assumes a future with zero tolerance for reality.” Michael Burry’s recent put positions on PLTR are “extending ~two years out... with understanding that bubbles don’t burst on schedule; they fracture when belief collides with a single, ordinary disappointment.”

TSLA: Tesla is valued like a “sci-fi monopoly.” Its vehicle deliveries fell 15.6% year over year, and it has posted two consecutive annual declines. This is a sign that the company is in the mature phase of the EV industry, where competition is affecting its business. Musk is banking on the Robotaxi as Tesla’s revenue saviour, and right now it’s a valuation risk.

AMD: Gross margins at AMD, Nvidia’s rival, sit in the low-50% range, and its “AI narrative is still expectation-heavy and income-statement-light.” Revenue is up ~28% year over year, but receivables rose faster, at 60%. This suggests that sales are being booked before the cash is collected, leaving less cash on hand: “a sign of stress, not strength.”

NVDA: We’ll spend time on this below.
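The AMD point about receivables outpacing revenue can be checked with simple arithmetic. The sketch below uses days sales outstanding (DSO), a standard measure of how long it takes to collect cash after a sale. Only the two growth rates (28% and 60%) come from the takeaway above. The starting revenue and receivables figures are hypothetical placeholders, not AMD's actual numbers.

```python
def days_sales_outstanding(receivables: float, revenue: float, days: int = 365) -> float:
    """Average number of days it takes to turn a booked sale into cash."""
    return receivables / revenue * days


# Hypothetical starting point (NOT AMD's real figures), in arbitrary units.
base_revenue = 100.0
base_receivables = 15.0

# Growth rates quoted in the article.
revenue_growth = 0.28
receivables_growth = 0.60

dso_before = days_sales_outstanding(base_receivables, base_revenue)
dso_after = days_sales_outstanding(
    base_receivables * (1 + receivables_growth),
    base_revenue * (1 + revenue_growth),
)

print(f"DSO before: {dso_before:.1f} days")
print(f"DSO after:  {dso_after:.1f} days")
```

Whatever the starting figures, receivables growing faster than revenue means collection takes longer, so reported sales run ahead of cash actually received.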

Oracle’s disturbing performance

One of the companies Coastal Journal had been tracking was Oracle, a cloud provider/hyperscaler in the Generative AI Tech space. Crennan noted this:

“Back in September [2025], it was really interesting to me because, after they [Oracle] reported earnings, their stock was up in the after-hours session by about 20%. This is a big mega-cap company. I’ve seen 5%-10% after-hours surges, so this was an immediate red flag to me. That’s when I looked into their earnings statement and found out they were losing money and their debt was going through the roof. I questioned why this was happening. Then I looked into everything… exposing their debt issues. And suddenly, from that moment, the stock’s down, I think ~30-40%.”

Crennan noted that investors are now questioning the value of these companies and whether they will default. He adds that spreads on credit default swaps (CDS, a financial instrument whereby the seller compensates the buyer in the event of a borrower's default on its debt) are rising because companies like Oracle are spending billions of dollars on which they will be unable to generate a return that satisfies investors. He adds that investors in 2026 will examine these activities with greater scrutiny.

The Price/Earnings Ratio, which measures a company’s share price relative to its earnings per share, indicates what investors are willing to pay for every dollar of the company’s earnings. Right now, Crennan notes:

Investors are paying a premium for companies to grow their earnings, which means higher prices and profits. If those revenues and profits don’t materialize, that signals the companies are overvalued at today’s prices, and the market will correct that overvaluation, which could be very brutal.
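A rough sketch of the arithmetic behind that warning follows. Every number here is invented for illustration, including the "normal" multiple the market falls back to. It shows only how a premium P/E turns into a price drop if the expected growth does not arrive.

```python
def pe_ratio(share_price: float, earnings_per_share: float) -> float:
    """Price investors pay for each dollar of annual earnings."""
    return share_price / earnings_per_share


# Hypothetical company; all figures are assumptions for illustration.
share_price = 200.0
earnings_per_share = 4.0
premium_pe = pe_ratio(share_price, earnings_per_share)

# Assume earnings stay flat and the market decides to pay only 25x for them.
NORMAL_PE = 25.0
corrected_price = earnings_per_share * NORMAL_PE
drawdown = 1 - corrected_price / share_price

print(f"Premium P/E: {premium_pe:.0f}x")
print(f"Price at {NORMAL_PE:.0f}x earnings: ${corrected_price:.2f}")
print(f"Implied decline: {drawdown:.0%}")
```

In this example the business itself has not changed at all. Only the multiple investors are willing to pay has, which is the kind of correction Crennan describes.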

Efficiency Does Not Translate into Profitability

Yahoo! Finance recently posted that, according to internal company documents, OpenAI is predicted to lose $14 billion in 2026. Those losses, according to The Information, will continue to grow to $44 billion by 2029 before the company starts to turn a profit.

OpenAI is losing significant amounts of money, largely because the increased usage of its Large Language Models (LLMs) drives up, rather than decreases, costs. While more users usually improve economies of scale, the immense compute power required to run AI models means that higher usage directly translates to higher losses.

“The 'Usage' Paradox: CEO Sam Altman admitted that OpenAI loses money on its $200/month 'Pro' subscriptions because users are leveraging the models for intensive tasks more than expected.”

This was explained earlier in Toby Ord’s chart. What’s clear is that OpenAI hasn’t quite figured out how to price effectively, because they’re not realizing economies of scale.

Crennan argued that in Feb 2025, DeepSeek was found to be far more efficient, less costly, and using less compute than ChatGPT for similar prompt queries. DeepSeek's LLM activates only a subset of its 671 billion total parameters per query (a Mixture-of-Experts architecture). By his account, ChatGPT uses a traditional dense transformer that applies all of its parameters to every task, making it far more costly and less efficient. He added that if models themselves are more efficient, then the question arises whether we need these data centers.
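For readers unfamiliar with Mixture-of-Experts, the toy sketch below shows the basic idea of routing a query to only a few "experts" so that most parameters sit idle. It is not DeepSeek's implementation. The expert count, the top-k value, the parameter sizes, and the random router scores are all assumptions chosen to keep the example small.

```python
import random


def route_top_k(scores: list[float], k: int) -> list[int]:
    """Return the indices of the k experts with the highest router scores."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:k]


# Toy configuration (hypothetical, far smaller than any real model).
NUM_EXPERTS = 8
TOP_K = 2
PARAMS_PER_EXPERT = 1_000_000

random.seed(0)
# Stand-in for a learned router: one score per expert for a single query.
router_scores = [random.random() for _ in range(NUM_EXPERTS)]

active_experts = route_top_k(router_scores, TOP_K)
active_params = len(active_experts) * PARAMS_PER_EXPERT
total_params = NUM_EXPERTS * PARAMS_PER_EXPERT

print(f"Experts used for this query: {active_experts}")
print(f"Share of parameters active: {active_params / total_params:.0%}")
```

A dense model would run all eight experts' worth of parameters for every query. The routed version touches a quarter of them, which is where the compute savings come from.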

I also argue that, regardless of model efficiency, costs will continue to rise because efficiency drives the commodification of technology, which will undoubtedly lead to much stronger user adoption, thereby raising costs. At this rate, “free users” subsidized through OpenAI’s new ad model may do little to sufficiently cover investors’ returns.
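This dynamic is usually called the Jevons paradox, and a few lines of arithmetic show how it works. The per-query costs and query volumes below are invented for illustration and are not OpenAI's figures.

```python
def total_cost(cost_per_query: float, queries: int) -> float:
    """Total serving cost for a given per-query cost and usage level."""
    return cost_per_query * queries


# Hypothetical scenario: efficiency cuts the cost of each query by 80%,
# but cheaper, better service attracts ten times as many queries.
cost_before = total_cost(cost_per_query=0.010, queries=1_000_000)
cost_after = total_cost(cost_per_query=0.002, queries=10_000_000)

print(f"Total cost before efficiency gain: ${cost_before:,.0f}")
print(f"Total cost after efficiency gain:  ${cost_after:,.0f}")
print(f"Change in total cost: {cost_after / cost_before - 1:+.0%}")
```

Total cost rises whenever usage grows faster than per-query cost falls. In this made-up case, an 80% efficiency gain still doubles the bill.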

I reference a recent post about Jevons Paradox:

The Dreaded Circular Investment Schemes to Fool Investors

It’s been reported that the AI labs (OpenAI, Anthropic), the infrastructure and cloud providers, chip providers, and tech companies buying services from the three are entangled in a web of circular financing.

NVIDIA announced plans to invest $100 billion in OpenAI over the next few years. OpenAI will use Nvidia’s investment to buy Nvidia’s own chips to power its new data centres. OpenAI has agreed to spend $300 billion to purchase data centre capacity from Oracle, which is now rushing to build new data centres packed with Nvidia chips.

NVIDIA invests in OpenAI → OpenAI rents compute from Oracle → Oracle buys NVIDIA’s chips → NVIDIA records huge sales.

Crennan explains Big Tech behaviour this way:

The circular financing with Generative AI is huge. Several companies are involved in it. Everyone’s taking money out of their left pocket and putting it in their right pocket — circular or vendor financing.

He parallels this to the first tech bubble. In the late 1990s, fibre-optic investment was driven by explosive demand for internet bandwidth ahead of the dot-com crash. When the dot-com bubble burst in 2000 and 2001, the industry faced a severe crash. The vast majority of the fibre laid during the boom remained unused because capacity far exceeded actual demand.

As Greg Crennan states:

What people forgot about tech bubble 1.0: companies were laying fibre-optic cable needed for the future and developing the internet infrastructure, which was true at the time. Everyone got excited, and the valuations of these companies rose so high that, as soon as growth slowed, everything basically collapsed.

What’s happening today with data centres is similar, but a little more refined now. So, it’s harder to catch when you’re looking at accounting data. It’s very hard to pinpoint and find these circular finance deals. But the issue is that when a company like OpenAI announces it will spend $100 billion with AMD—over ten years and with required benchmarks—the headlines say, “AMD is getting $100 billion from OpenAI.” That is not the real issue.

He adds that the value of these companies skyrockets, pricing in growth that may not materialize or may not materialize at the level originally announced.

In tech bubble 1.0, companies resorted to channel stuffing—pushing their products without collecting cash flows. Crennan adds that, in the short term, their earnings look better, but eventually the lag in cash catches up with them. Those are the warning signs we must heed.

That’s where these issues are in markets today:

…you get these massive valuations, and all of a sudden, you get this deterioration in fundamentals, where you’re looking at these companies making money, and realize that the numbers don’t justify their market caps or valuations.

NVIDIA and CoreWeave

The most prominent case of circular financing is between NVIDIA and CoreWeave. NVIDIA is a maker of GPU chips to power computing. CoreWeave is a cloud computing company that provides the GPU-powered infrastructure for AI workloads. CoreWeave is a client of NVIDIA.

Here is the timeline:

As of September 2025, CoreWeave had $18.81 billion in debt obligations.

In September 2025, NVIDIA announced a $6.3 billion deal with CoreWeave, “… ensuring that the chip leader will buy any cloud capacity that CoreWeave is unable to sell to customers.”

In January 2026, NVIDIA announced a new $2 billion investment in CoreWeave. As Motley Fool reports: “It helps funnel GPU demand to Nvidia, and protects its market share.”

Greg Crennan is skeptical as he says:

"If demand for data centers is so big, CoreWeave wouldn't need NVIDIA to guarantee compute power... If demand were so strong, their cash/sales would be soaring, and their debt ratios would be shrinking, but the fact is, they're having trouble establishing demand. If we’re having demand issues now that NVIDIA has to backstop CoreWeave, that is a worrying sign. If CoreWeave were to go out of business, then NVIDIA would lose one of its largest customers. This whole thing would collapse on this whole AI data center bubble. "

Similar patterns from 25 years ago:

Prior to the dot-com crash, companies had become overly valued on promises of unrealistic growth and profitability. They were also highly leveraged and had taken on huge debt to finance their expansions.

And then, when growth slows, demand fails to materialize, and bad things happen. Will 2026 be the year when we start to see a similar fate in this Generative AI bubble?

Hessie does a great job exposing the fragility in Big Tech’s core assumptions, and it feels like we’re one hiccup away from a meaningful correction. As you point out, LLMs invert the usual economies of scale; greater usage raises costs for providers rather than lowering them. At the same time, more researchers are joining Gary Marcus in questioning whether LLMs and brute-force scaling are a viable path to AGI. What’s most striking is that U.S. tech companies appear to be betting the farm on brittle assumptions. Companies once defined by abundant free cash flow are now pouring capital into massive build-outs without a clear or proven path to profitability.

I think that Nvidia's backing of CoreWeave is both a danger sign and a strong signal that it will backstop data centers that would otherwise be in deeper trouble. I suspect GPU/token demand will grow in 2026 unless someone makes a major advance in edge-device AIs, but how can a data center still loaded with older chips (Nvidia is releasing new chips every year now) compete against a center loaded with newer chips?

The only way is if demand outstrips supply. If a data center can't at least recoup its investment then it's done for, but this fast release cadence makes it bloody tough. Nvidia knows that. At some point, the music stops and somebody is left without a chair to sit in. That's when there's a danger that the whole Jenga tower comes down (to mix game metaphors).